Chaos Reigns Within

Random adventures in Data Analytics

Thursday, 22 August 2024

Monday, 11 December 2023

Advent Of Code and Alteryx

It is now just over 5 years since I tweeted the below on X (Twitter):

Image from Alteryx community [2]

What is the Advent Of Code?

But what is this Advent Of Code (AoC)? And why do we care that people are solving it in Alteryx?

Advent Of Code was created by Eric Wastl in 2015[3] and is "an annual set of Christmas-themed computer programming challenges that follow an Advent calendar."[4] So they are not particularly data problems, nor designed to be solved by Alteryx; people solve them in all sorts of computer languages. Over the years I have solved them in many languages including Python, R, Scala and Rust. As a software engineer I find it an interesting way of playing with a new language and learning its capabilities, strengths and weaknesses. It is also a way of seeing how other people write code.

So why solve them in Alteryx? Well the simplest answer is why not? Doing strange things with Alteryx has been something I have enjoyed doing for many years.

The second answer is because I can :) (Sometimes...) What I noticed back in 2018, and has held true since, is that Alteryx is very good at solving the early days of the AoC challenges. And this is in many ways due to how Eric designed the puzzles: the input to every problem is always a single text file and the answer (or output) is always a single integer. (Well two integers, as each day has two parts.) So however complex the puzzle, all of the input is always contained in a single text file, that has to be parsed into its parts, before you move on to solving the actual puzzle. And if there is one thing that Alteryx can do very well, it is parsing text files!
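To make that "one text file in, one integer out" shape concrete, here is a minimal Python sketch. The puzzle is made up purely for illustration (add up the largest number on each line) and is not a real AoC problem; the names `parse`, `solve` and `sample` are my own.

```python
def parse(text: str) -> list[list[int]]:
    """Parsing step: split the raw puzzle text into rows of integers."""
    return [
        [int(token) for token in line.split()]
        for line in text.splitlines()
        if line.strip()  # ignore blank lines
    ]


def solve(text: str) -> int:
    """Hypothetical puzzle: add up the largest number found on each line."""
    return sum(max(row) for row in parse(text))


# In a real puzzle this string would be read from the single input file.
sample = "3 9 4\n10 2\n7\n"
answer = solve(sample)
print(answer)
```

The parsing function is usually where the early days are won or lost, which is exactly the part Alteryx handles well.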

Where things become interesting is that, as the problems get more complex, we begin to run into the limits of Alteryx as a programming language. Which leads me to my next interesting question:

Is Alteryx A Programming Language?

This is an interesting question. It is certainly not marketed as a programming language. Typically we think of a programming language being made up of code and Alteryx is talked about as being "code free".

But in reality: yes, Alteryx is a programming language.

As the user drags and drops tools on to the canvas they are building up an XML document that represents the data transformation that they have defined. That XML (or code) is then executed by the Alteryx engine.

If we are going to be more specific we can say that Alteryx is a "Visual Programming Language" and it is a "Domain Specific Language". The domain in question being data analytics. And it is this domain which brings certain limits to the language which makes some of the Advent Of Code problems more challenging...

But Isn't Alteryx Turing Complete?

Well yes. I think it was Steve Ahlgren who famously stated that Alteryx was Turing complete if you used a rock to hold down the run button. But being Turing complete isn't actually that interesting for real world applications. My 10 year old daughter with a pen and a pad of paper is Turing complete. As is Excel [5]. As is "Magic: the Gathering" [6]. But none of those are going to be particularly good for writing arbitrary computer programs in. Turing completeness is a useful concept when we are looking at the theory of what computer algorithms can or cannot do. It is not a great measure for what they can practically do with a reasonable amount of computing power and time.

Where are the limits of the Alteryx language?

I think there are two major limitations that you run into in trying to solve Advent Of Code problems in Alteryx: Types and Loops.

(Please add in the comments if you can think of more.)

Types

The only data type in the top level Alteryx language is the data table. This makes a lot of sense given our previous statement that it is a Domain Specific Language for manipulating data, but it does limit its capabilities when trying to use it as a more general purpose language. Of course within the data table there is a rich type system and usually you can work within the table to represent the data variables you need.

Loops

I think this is the big one, and usually the point where I give up on AoC in Alteryx for the year. Alteryx does have the concept of loops in the form of batch macros (loosely equivalent to a FOR loop) and iterative macros (loosely equivalent to a DO WHILE loop), but these can be difficult to configure (especially if you have some complex data variables you are manipulating in tables). But more of a problem on the practicality front is that they can take a long time to execute. I have built AoC solutions that have taken over 24 hours to execute. Generate Rows can be a good way to avoid a macro solution and simulate a loop, but can sometimes bring different data size issues.
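For readers who think in code, the loose equivalence can be sketched in Python. This is only an analogy under my own assumptions, not how the Alteryx engine works: a "table" is modelled as a list of dictionaries, and `batch_macro`, `iterative_macro`, `square` and `halve_step` are hypothetical names.

```python
Table = list[dict]  # the only top-level "type": a table of records


def batch_macro(control: Table, body) -> Table:
    """FOR-loop analogue: run `body` once per control record, union the outputs."""
    out: Table = []
    for row in control:
        out.extend(body(row))
    return out


def iterative_macro(data: Table, body, max_iterations: int = 100) -> Table:
    """DO-WHILE analogue: run `body` at least once, then repeat for as long
    as it sends records back to the loop output (up to `max_iterations`)."""
    done: Table = []
    for _ in range(max_iterations):
        finished, loop_back = body(data)
        done.extend(finished)
        if not loop_back:
            break
        data = loop_back
    return done


def square(row: dict) -> Table:
    """Example batch body: one output record per control record."""
    return [{"n": row["n"], "sq": row["n"] ** 2}]


def halve_step(rows: Table):
    """Example iterative body: odd numbers are finished, even ones are halved and looped."""
    finished = [r for r in rows if r["n"] % 2 == 1]
    loop_back = [{"n": r["n"] // 2} for r in rows if r["n"] % 2 == 0]
    return finished, loop_back


print(batch_macro([{"n": 2}, {"n": 3}], square))
print(iterative_macro([{"n": 12}, {"n": 7}, {"n": 8}], halve_step))
```

Even in this toy form you can see the awkward part: every variable the loop needs has to travel round as rows in a table.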

Conclusion

Day 9 is usually about as far as I get with Advent Of Code and Alteryx, before the busy-ness of family life and December overtakes life. (And having departed Alteryx last week, my trial Alteryx license will soon run out). So next year I will be completing AoC in a different language.

Good luck to everyone still playing!

Merry Christmas and a Happy New Year

Adam

References:

[1] Image Creator from Microsoft Designer (bing.com)

[2] Advent of Code 2023 (2023). https://community.alteryx.com/t5/Alter-Nation/Advent-of-Code-2023/ba-p/1211440.

[3] Advent of Code 2023 (2023). https://adventofcode.com/.

[4] Wikipedia contributors (2023) Advent of Code. https://en.wikipedia.org/wiki/Advent_of_Code.

[5] Couriol, B. (2021) 'The Excel Formula language is now Turing-Complete,' InfoQ, 2 August. https://www.infoq.com/articles/excel-lambda-turing-complete/.

[6] Churchill, A. (2019) Magic: The Gathering is Turing Complete. https://arxiv.org/abs/1904.09828.

Tuesday, 5 December 2023

The mountains are calling and I must go

Image generated with the assistance of AI [1]

"...and I will work on while I can, studying incessantly." - John Muir, 1873 [2]

Some personal news to share with you all today: after almost 13 years working at Alteryx, the time has come for me to move on to new adventures.

My last day was December 1st 2023.

Thank Yous

I have to start with the thank yous in case some of you don't make it to the end. And after 13 years there are a lot of people to thank, so apologies in advance to all the people I miss.

My biggest thank you has to go to Ned Harding for not only creating the fantastic piece of software that is Alteryx Desktop Designer, but for giving me the opportunity to be a part of building it. Thank you for your mentorship and friendship over the years. And of course thank you to Dean Stoecker and Libby Duane Adams for creating this place I have called home for so long. It has been an amazing journey!

My First Alteryx

This is a hard thank you list to write, as Alteryx has changed so much since when I started to when I left, that in many ways it feels like I have worked for multiple different companies. Each with its own people and styles. And suddenly I am leaving all of them at once, even though some of the earlier incarnations are long gone.

I would like to thank all of the fine folk of the Boulder office and my "first Alteryx" for making myself and my wife so welcome when we first moved there back in 2011. There are too many to name you all but they include: Linda Thompson, Amy Holland, Tara McCoy Giovenco, Rob McFadzean, Rob Bryan, Catherine Metzger, Margie Horvath, Damian Austin, Nathalie Smith, Hannah Keller, Wendy Chow and Kim Hands.

The Spirit Of Alteryx

The next set of thank yous go to the people who embody the "Spirit of Alteryx" starting with Steve Ahlgren and Linda Thompson (one of whom I think coined this phrase) for everything you do to keep that magic of what makes Alteryx special continue to burn. Especially in recent years at the Inspire conference and working with the ACEs. Which brings me to the ACEs: one of the most amazing groups of people I have the pleasure to know in my life. Some of my happiest moments have been in a room with these people, brainstorming problems and ideas of how to solve complex problems and make the product better. You are too many to call out individually, but I do have to thank Mark Frisch, my teammate for the CReW macros and someone who embodies the Spirit of Alteryx in all that he does.

Projects

I will end with some thank yous for a few of my favourite projects over the years and for the good people who were there with me:

- The CReW macros - The project that I call my greatest success and failure at Alteryx - Mark Frisch, Chris Love, Joe Mako and Daniel Brun

- The AMP engine - The second generation massively multi-threaded Alteryx engine. One of the projects that I am most proud of. - Ned Harding, Scott Wiesner, Chris Kingsley, Sergey Maruda, Roman Savchenko and all of the amazing C++ engineers who have contributed to that project.

- The Black Pearl project - The one that got away... (Naming a project after a cursed pirate ship was perhaps in hindsight asking for trouble, but I still think in a parallel universe this ship still sails on and would have been a great feature.) - Boris Perusic, David Vonka and all of the good crew who sailed that short but exciting voyage with us.

- Control Containers - My swan song. Another one that I wasn't sure would make it out at times, but I am so happy that it did. My last big contribution to desktop designer. - The one and only Jeff Arnold who pulled it over the line with me.

Apologies again to all the people who I have not been able to mention by name. I thank all of you for your contributions over the years.

My Journey

It was over 15 years ago that I first discovered a product that I then called Alteryx made by a company called SRC, and that you would now call Desktop Designer made by a company called Alteryx. And it is fair to say that product has been one of the great loves of my life. If you have ever seen the film Good Will Hunting, there is a scene in it where Matt Damon's character (Will) is trying to explain to Minnie Driver's character (Skylar) how he is so good at Maths:

Will : Beethoven, okay. He looked at a piano, and it just made sense to him. He could just play.

Skylar : So what are you saying? You play the piano?

Will : No, not a lick. I mean, I look at a piano, I see a bunch of keys, three pedals, and a box of wood. But Beethoven, Mozart, they saw it, they could just play. I couldn't paint you a picture, I probably can't hit the ball out of Fenway, and I can't play the piano.

Skylar : But you can do my o-chem paper in under an hour.

Will : Right. Well, I mean when it came to stuff like that... I could always just play. [3]

Well for me, when it came to Alteryx, I could always just play.

And what a journey that has taken me on. I have gone from a data analyst to a principal software engineer. From an individual contributor to a director of over 50 engineers. From my first Inspire, feeling absolutely terrified talking in front of 20 people to the London Inspire at Tobacco Dock, closing out the conference on the main stage with Ned.

I have laughed, cried (only once in the office), grown personally and professionally in more ways than I could ever have imagined, and made some lifelong friends who I am so happy are part of my life.

Please don't be a stranger. Always happy to meet up for a beer if you are ever in London, or jump on a Zoom call and talk data and analytics.

Where Next?

Whenever someone posts a leaving post on LinkedIn there is always the comment that asks "where are you off to?" Well good readers, that is another book that is yet to be told, and one that you will have to wait a little while to start reading. But for now let us say I am excited for a new adventure, and I will leave you with the haiku from Alteryx past:

Chaos reigns within.

Reflect, repent, and restart.

Order shall return. [4]

Adam

References:

- [1] Image Creator from Microsoft Designer (bing.com)

- [2] Muir, Gifford, and Gifford, Terry. John Muir : His Life and Letters and Other Writings. London: Baton Wicks, 1996. Print. Page 190.

- [3] Affleck, B., Damon, M., Driver, M., Elfman, D., Escoffier, J.-Y., Sant, G. V., & Williams, R. (2015). Good will hunting. Berlin; Arthaus.

- [4] Early Alteryx unhandled exception error message. Original source unknown.

Wednesday, 8 November 2023

Introducing Our Latest Innovations: CReW Macros - Ensure Path and ZIP Output

Greetings, data enthusiasts and Alteryx aficionados! We are thrilled to unveil two exciting additions to our Chaos Reigns Within Alteryx Macro family. Say hello to "Ensure Path" and "ZIP Output," two remarkable macros designed to take your data workflow to the next level.

Available now on Alteryx Marketplace...

Key Take-Away:

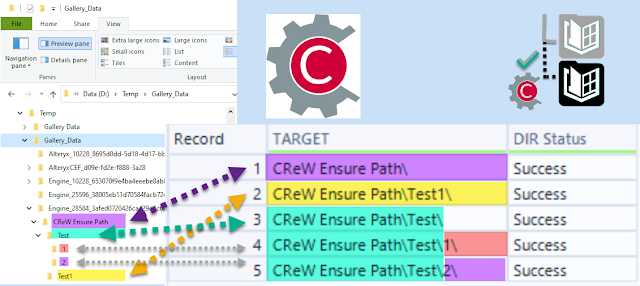

Ensure Path

Ensuring Smooth Sailing in Your Data Journey

Managing directory paths can be a daunting task, often leading to errors and disruptions in your data processing. But fear not! Our "Ensure Path" macro is here to simplify your life. Here's why it's a game-changer:

✅ **Dynamic Directory Checking**: This macro intelligently reads incoming directory or full-path file names and dynamically checks the existence of all distinct directories.

✅ **Versatile Path Handling**: Whether you're working with absolute or UNC (Universal Naming Convention) path types, Ensure Path seamlessly accommodates both.

✅ **Comprehensive Status Updates**: Stay in the loop with detailed status reports on all directories, empowering you to monitor your workflow with confidence.

✅ **Swift Error Resolution**: In case of path creation failures, the macro provides clear error messages, enabling quick identification and resolution of issues.

✅ **Workflow Automation**: Designed with a dynamic structure, Ensure Path seamlessly integrates into your workflows, enhancing automation capabilities and eliminating manual path management.
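For the curious, the general idea behind the features above can be sketched in a few lines of Python. This is an illustrative sketch only and not how the macro itself is built: the function name `ensure_paths`, the status values and the rule "anything with a file extension is a file" are all assumptions of mine.

```python
from pathlib import Path


def ensure_paths(paths: list[str]) -> list[dict]:
    """Check (and create if missing) every distinct directory implied by `paths`."""
    # Assumption: a path with a file extension is a file, so we want its parent folder.
    dirs = {Path(p).parent if Path(p).suffix else Path(p) for p in paths}
    report = []
    for d in sorted(dirs):
        if d.is_dir():
            status, error = "exists", ""
        else:
            try:
                d.mkdir(parents=True, exist_ok=True)
                status, error = "created", ""
            except OSError as exc:
                # Report the failure instead of stopping the whole run.
                status, error = "failed", str(exc)
        report.append({"directory": str(d), "status": status, "error": error})
    return report
```

Calling `ensure_paths` with a mix of folder names and full file names returns one status row per distinct directory, which is the kind of report you would then filter for failures.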

Ready to streamline your data workflow and bid farewell to path-related hassles? Download "Ensure Path" now: [https://bit.ly/CReW_EnsurePath](https://bit.ly/CReW_EnsurePath)

ZIP Output

Effortless File Archiving and Sharing

If you've ever found yourself drowning in a sea of output files, our "ZIP Output" macro is here to rescue you. This macro simplifies file archiving and sharing:

✅ **Space-Saving Magic**: Combine multiple output files into a single, compressed ZIP folder, reducing clutter and saving valuable disk space.

✅ **Simplified Sharing**: Easily share your results with colleagues, clients, or collaborators by sending a single, compact ZIP file.

✅ **Workflow Efficiency**: Automate the archiving process within your Alteryx workflows, ensuring a smooth and organized data pipeline.
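If you want a feel for what "combine multiple output files into a single ZIP" means in code, here is a small Python sketch using the standard `zipfile` module. It is not the macro's implementation; `zip_outputs` is a hypothetical name and storing each file under its bare file name is my own simplification.

```python
import zipfile
from pathlib import Path


def zip_outputs(files: list[str], archive: str) -> list[str]:
    """Bundle `files` into one compressed ZIP and return the names stored in it."""
    with zipfile.ZipFile(archive, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for f in files:
            # Store each file under its bare name so the archive has no folder structure.
            zf.write(f, arcname=Path(f).name)
    # Re-open the archive to confirm what was actually written.
    with zipfile.ZipFile(archive) as zf:
        return zf.namelist()
```

One thing this simplification glosses over: two input files with the same name in different folders would end up as duplicate entries in the archive, so a real workflow would need a naming rule for that case.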

Ready to streamline your file management and sharing? Download "ZIP Output" now: [https://bit.ly/CReW_ZIPOutput](https://bit.ly/CReW_ZIPOutput)

**Get Started Today!**

Empower your data journey with these innovative CReW Macros. You can access and download them directly from the Alteryx Marketplace using the following links:

📁 **Ensure Path**: [CReW_EnsurePath](https://bit.ly/CReW_EnsurePath)

📁 **ZIP Output**: [CReW_ZIPOutput](https://bit.ly/CReW_ZIPOutput)

We believe that these macros will greatly enhance your Alteryx experience, making your data workflows more efficient and error-free.

Stay tuned for more updates and additions to our Alteryx Macro collection on ChaosReignsWithin.com. We can't wait to see how these macros help you conquer your data challenges! Happy data crunching! 🚀📊✨

Friday, 3 November 2023

Alteryx Obscura and Wordyx

[ Cross-posting from https://www.linkedin.com/pulse/alteryx-obscura-wordyx-adam-riley-lcmne ]

One of my favourite sessions at the Alteryx Inspire conference in recent years has been the fantastic "Alteryx Obscura" session invented by Alteryx's Steve Ahlgren. For anyone who hasn't seen the session: it is a series of lightning talks from Alteryx ACEs, employees and customers, making Alteryx do fun things that it was never designed to do.

Anyone familiar with my prior work on Chess, Fractals and Conway's Game Of Life in Alteryx will find it no surprise that Obscura is my kind of session! If you haven't managed to see one of these sessions live at Inspire yet then the Polish User Group did a re-run which you can watch here.

My presentation from 2022 was Wordyx: an implementation of a rather well known word game inside of an Alteryx workflow, played with a text input and browse tool.

The implementation in Alteryx takes surprisingly few tools!

We recently did an internal re-run here at Alteryx and I was asked if I could share the workflow from my Wordyx presentation, which I am very pleased to do here.

https://downloads.chaosreignswithin.com/Wordyx.yxzp

My 2023 presentation Blackjayx was less of a successful workflow (and not one that is yet in a state to share), but I did have the great pleasure of sharing a stage with a "virtual" Sir Ian Livingstone! Certainly a highlight of my career.

Adam Riley

Thursday, 5 October 2023

Announcing Alteryx Marketplace | CReW Macros Available via Alteryx!

Alteryx has launched their new Marketplace for Add-Ons.

The Alteryx Marketplace will be the place to find the latest and greatest for your data journey.

Featured Macros Include: Ensure Fields, Expect Equal, Expect Records, Expect Zero Records, Grouped Record ID, Control Container Error Check, Only Unique.

More are coming soon!

Ensure Fields https://bit.ly/CReW_EnsureFields

Expect Equal https://bit.ly/CReW_ExpectEqual

Expect Records https://bit.ly/CReW_ExpectRecords

Expect Zero Records https://bit.ly/CReW_Expect0Records

Grouped Record ID https://bit.ly/CReW_GroupedRecordID

Control Container Error Check https://bit.ly/CReW_ControlContainerErrorCheck

Only Unique https://bit.ly/CReW_OnlyUnique

Don't worry! CReW isn't going anywhere. We are simply making it easier to access the best macro add-ons directly from Alteryx.

Cheers,

Mark (and Adam)

Please subscribe to my YouTube channel.

Wednesday, 24 May 2023

Control Containers - Part 2 - CReW Container Error Check Macro

In part 1 of this series we looked at the case where we didn't have an input hooked up to our control container in the logging container. This time we are going to look at what we can do with the input anchor connected.

How does the Input Anchor work?

- If the Input Anchor is disconnected

  - All tools inside run as though they were in a regular container (or straight on the canvas)

- If the Input Anchor is connected

  - If the input anchor receives 0 records – The tools in the Control Container DO NOT run

  - If the input anchor receives >0 records – The tools in the Control Container run AFTER the last input record is received
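The rules above can be written out as a small Python sketch. This only models the behaviour described in the list and is not how Alteryx implements it; `run_control_container` is a hypothetical name, and passing `None` to mean "anchor disconnected" is my own convention.

```python
from typing import Callable, Iterable, Optional


def run_control_container(
    tools: Callable[[], list],
    input_anchor: Optional[Iterable] = None,
) -> Optional[list]:
    """Run `tools` according to the input anchor rules; return None if they did not run."""
    if input_anchor is None:
        # Anchor disconnected: behave like a regular container.
        return tools()
    # Anchor connected: consume every record first, so nothing inside
    # runs until the last input record has been received.
    records = list(input_anchor)
    if not records:
        return None  # 0 records: the tools do NOT run
    return tools()
```

So the input anchor acts as both a switch (zero records means skip) and a gate (wait for everything upstream to finish).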

Introducing the Container Error Check Macro

- If the incoming log messages contain an error all records get pushed out of F (Fail) output

- If incoming log messages have no errors all records get pushed out of S (Success) output
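In code terms the routing is all-or-nothing, which a short Python sketch makes clear. The shape of a log record is an assumption on my part: I am using a hypothetical `level` field with the value `"Error"`, and `container_error_check` is just an illustrative name.

```python
def container_error_check(log_messages: list[dict]) -> tuple[list[dict], list[dict]]:
    """Return (S, F): every record goes to F if any message is an error, otherwise to S."""
    # Assumption: each log record carries a "level" field, with "Error" marking errors.
    has_error = any(m.get("level") == "Error" for m in log_messages)
    if has_error:
        return [], log_messages
    return log_messages, []
```

Because only one of the two outputs ever carries records, each output can feed the input anchor of a different Control Container to choose which branch runs next.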
